The opinions in this post are my own, and do not represent my employer.

When undertaking digital transformation initiatives in your organisation, to effectively meet user needs, we need infrastructure technology that can adapt to user needs as fast as we do.

Because this type of technology is fairly new to government, there is a lot of uncertainty about how it can be used, as well as a lot of optimism about the opportunity it brings.

Let’s discuss some of the the risks, myths, and misconceptions we’ve encountered on our journey to fully cloud based infrastructure.

Myth: We can’t store data securely!

AWS is on Australian Signals Directorate’s Certified Cloud Services List alongside several other Infrastructure as a Service (IaaS) providers like Azure. AWS has four services IRAP accredited by ASD up to Unclassified DLM: EBS, EC2, S3, and VPC. If you’ve used AWS in the private sector you might find this catalogue limited, but there are a heap of workloads you can run on AWS with just these four services.

It’s worth noting that ASD acknowledges the risks that come with existing in house systems compared to cloud services. From ASD’s Cloud Computing Security for Tenants guide:

Organisations need to perform a risk assessment and implement associated mitigations before using cloud services. Risks vary depending on factors such as the sensitivity and criticality of data to be stored or processed, how the cloud service is implemented and managed, how the organisation intends to use the cloud service, and challenges associated with the organisation performing timely incident detection and response. Organisations need to compare these risks against an objective risk assessment of using in house computer systems which might: be poorly secured; have inadequate availability; or, be unable to meet modern business requirements.

While these AWS services are accredited up to Unclassified DLM, if you have protected data, there are some strategies you can use to make parts of this data available on AWS.

Most data is classified at the row level in databases. While you can’t put a protected row on AWS, you’ll often find that individual columns in that protected row have a lower classification. This means you can put unclassified columns from protected rows on AWS, and work out a way to match up data between your public systems on AWS, and your private systems on protected networks.

Misconception: We’ll run it like physical infrastructure!

Once you’ve procured AWS, often you’ll want to go for the biggest cost savings immediately. Reserved Instances are a great way to achieve these cost savings, especially if you buy them for a three year period.

But the value of AWS to government is not low-cost compute, it’s on-tap capacity. We can’t extract this value unless we build and run services like AWS recommends. To do this, we have to think differently about our software architectures.

The risk with buying RIs up front for three years is you don’t know what your workloads are going to be three years from now, let alone what architecture you’ll build to deal with them.

You might optimise your code to run in parallel across many cheaper instances. You might shift your workloads to spot instances for ad-hoc calculations.

To achieve a sustainable, controlled spend, you have to start with On-Demand instances, track your spend over several months, and identify instance types that are constantly used.

Then buy RIs for a year.

This works well for both lifting-and-shifting traditional workloads, or for greenfields projects using cloud native architectures. If you’re really keen, purchase RIs for three years, but beware the risk of premature investment in an architecture that may not match your workloads.

If you do find that you’re not using the RIs you’ve purchased, you can sell them on the RI marketplace.

Risk: Our spend is getting out of control!

If you don’t spend time monitoring and analysing your usage of IaaS services, you can very quickly find yourself spending more than you planned.

Use multiple accounts to segment and control your spend.

Consolidated Billing allows you to logically separate services you’re delivering across multiple accounts, but see costs in one place: on the parent account’s billing page. Even better, you can grant your finance teams the ability to view billing information in the AWS console, so they can get straight to the information they need to make informed, financially prudent decisions.

You can take this even further by using blended rates across your AWS accounts, where On-Demand and Reserved Instance are averaged across linked accounts using consolidated billing. This allows you to make reservations once, but automatically get the cost savings across all linked accounts.

Separate accounts are also useful if your service ever gets mogged – just unlink the account and re-link it to the new parent account.

One technical approach to controlling spend is to automatically shut down non-production environments every night, and rebuild automatically in the morning. Because of On-Demand instance pricing is calculated hourly, you’ll halve your cost by only running instances for half the day.

The side effect of this is incentivising a culture of technical resilience. When you’re creating and destroying whole replicas of production systems every day, you become really good at creating and destroying environments, and more importantly automatically handling failure.

There’s also the benefit of better security posture through short-lived environments. By having ephemeral environments, we reduce the impact of one of the three resources required for effective cyber attacks: time. When we rebuild environments daily from fully patched base images, we time limit the window of opportunity for attacks to take hold.

Risk: Our stuff is getting hacked!

As we mentioned before, we can’t extract the value from IaaS providers like AWS unless we build and run services like they recommend. One of the fastest ways to do this is to give your developers full AWS access, to experiment with different ways of building services. By using IAM users, groups, and roles, you can selectively grant your developers the ability to create, update, and destroy environments, fostering that culture of technical resilience.

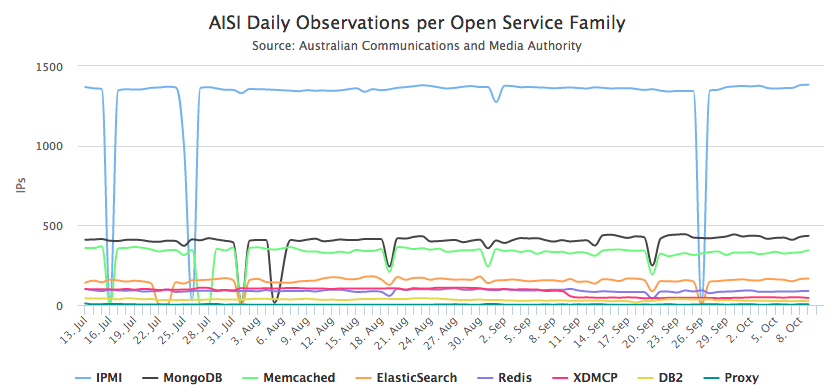

One side effect of this delegation of responsibility is services and data can be accidentally exposed to the world. You just need to take a look at the AISI daily observations per open service family graphs to see how prevalent this problem is across the Australian address space:

One solution is to audit publicly exposed services on your AWS accounts hourly, and automatically notify your people when the automated security monitoring detects something amiss. This is surprisingly easy to do with IaaS: just query the APIs to get a list of IP addresses in use, run a scan against these addresses, and notify owners immediately, all based on tag information on vulnerable hosts, also exposed through the API.

Misconception: We aren’t getting the reliability benefits!

As we mentioned before, we can’t extract the value from IaaS providers like AWS unless we build and run services like they recommend. On IaaS like AWS, we do this by building highly reliable systems from unreliable components.

One very simple way of achieving this is with Auto Scaling Groups. You can use Auto Scaling Groups to ensure a minimum number of like-instances are running, and to scale the number of instances in the group up and down based on demand. This ensures that if any of the underlying instances that make up the Auto Scaling Group fail, they are automatically recreated.

To get the full benefit of Auto Scaling Groups, you need to pre-bake your applications into your instances. Think of it as a frozen pizza – you automate the hard work up-front to get your application and environment ready to go, then the Auto Scaling Group warms them up at the last minute for consumption.

The caveat for this to work is this: you need a strong continuous delivery capability that is highly automated – everything must go to production through the pipeline.

The effect of this is that changes and releases become non-events. You can very quickly reach a point where you’re deploying tens, if not hundreds of changes a day – all with minimal human intervention.

Whenever you make a change to the application or the underlying environment, your systems automatically build new images that can be used in your Auto Scaling Groups. This requires new tools and ways of addressing your infrastructure, programatically.

Having a fully automated change process can make satisfying regulatory requirements easier, because all changes are highly controlled and logged, and each part of the automation has limited access, courtesy of IAM. When you combine this with CloudTrail, you get a pretty powerful combination of access control and auditing.

Now you’re getting multiple benefits: reliability, auditability, scalability, and pertinently, cost reduction – you don’t pay for what you’re not using, calculated by the hour.

Conclusion

The opportunity IaaS provides is immense.

IaaS providers like AWS help make doing the right thing easy. IaaS eliminates classes of problems, freeing up your teams to focus on the bigger picture. Most importantly, it frees people up to help your organisation learn about modern technology practices for building highly reliable government services.